Must Know 10 Common Bad Data Cases and Their Solutions

Introduction

In the data-driven era, the significance of high-quality data cannot be overstated. The accuracy and reliability of data play a pivotal role in shaping crucial business decisions, impacting an organization’s reputation and long-term success. However, bad or poor-quality data can lead to disastrous outcomes. To safeguard against such pitfalls, organizations must be vigilant in identifying and eliminating these data issues. In this article, we present a comprehensive guide to recognize and address ten common cases of bad data, empowering businesses to make informed choices and maintain the integrity of their data-driven endeavors.

What is Bad Data?

Bad data is data whose quality is not fit for the purpose for which it was collected and processed. Raw data extracted directly from social media sites or any other source is usually of poor quality, and it needs processing and cleaning before it can be used.

Why is Data Quality Important?

Data serves numerous purposes in a company. Because it underlies many decisions and functions, any compromise in its quality affects the whole process. Accuracy, consistency, reliability and completeness are each important aspects of data quality, and each needs separate, detailed attention.

Top 10 Bad Data Issues and Their Solutions

Here are the top 10 bad data issues that you must know about, along with their potential solutions:

- Inconsistent Data

- Missing Values

- Duplicate Entries

- Outliers

- Unstructured Data

- Data Inaccuracy

- Data Incompleteness

- Data Bias

- Inadequate Data Security

- Data Governance and Quality Management

Inconsistent Data

Data is inconsistent when it contains conflicting or contradictory values. This typically happens when results from different sources or collection methods disagree. It can also arise when data from different time periods is misaligned because of measurement errors, differing sampling methodologies and similar factors.

Challenges

- Incorrect conclusions: Leads to incorrect or misleading analysis, which affects the outcomes

- Reduced trust: It decreases trust in the data and in the results derived from it

- Wasted resources: Collection of data is a task followed by its processing. Working on inconsistent and wrong data wastes efforts, resources and time.

- Biased decision-making: Inconsistent data can skew the analysis so that only one perspective is generated and accepted.

Solutions

- Be transparent about data limitations while presenting the data and its interpretation

- Verify the data sources before the evaluation

- Check data quality

- Choose the appropriate analysis method
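
As a rough illustration of verifying sources and checking quality, the sketch below compares two hypothetical sources keyed by customer ID and lists the fields whose values disagree. The record layout, the field names and the `find_conflicts` helper are assumptions made for this example, not part of any particular tool.

```python
def find_conflicts(source_a, source_b):
    """Return (key, field, value_a, value_b) for every field that disagrees."""
    conflicts = []
    # Only keys present in both sources can contradict each other.
    for key in sorted(source_a.keys() & source_b.keys()):
        rec_a, rec_b = source_a[key], source_b[key]
        for field in sorted(rec_a.keys() & rec_b.keys()):
            if rec_a[field] != rec_b[field]:
                conflicts.append((key, field, rec_a[field], rec_b[field]))
    return conflicts


# Hypothetical records from two systems (illustrative data only).
crm = {
    101: {"country": "US", "signup": "2023-01-15"},
    102: {"country": "DE", "signup": "2023-02-01"},
}
billing = {
    101: {"country": "US", "signup": "2023-01-15"},
    102: {"country": "FR", "signup": "2023-02-01"},
    103: {"country": "IN", "signup": "2023-03-09"},
}

conflicts = find_conflicts(crm, billing)
for key, field, a, b in conflicts:
    print(f"record {key}: {field} is {a!r} in CRM but {b!r} in billing")
```

A list like this only tells you where sources disagree. Deciding which source to trust still depends on knowing how each one was collected.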


Missing Values

There are various methods to identify missing or NULL values in a dataset, such as visual inspection, reviewing summary statistics, data visualization and profiling tools, and descriptive queries. Imputation techniques can then be used to fill the gaps.

Challenges

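- Bias and sampling issues: Leads to biased results when the missing values are not randomly distributed, because the remaining sample no longer represents the population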

- Misinterpretation: Relationships between variables can be misread, leaving real dependencies unseen.

- Reduced sample size: It poses limitations when using software or functions that require a minimum sample size

- Loss of information: Results in a decrease in dataset richness and completeness.

Solutions

- Imputation: Use imputation methods to create complete data matrices, with estimates generated from the mean, median, regression or other statistical and machine learning models. One can use single or multiple imputation.

- Understanding the missing data mechanism: Analyze the pattern of missing data, which may fall into different types, such as Missing Completely at Random (MCAR), Missing at Random (MAR) and Missing Not at Random (MNAR)

- Weighting: Use weighting techniques to adjust for the impact of missing values on the analysis

- Collection: Adding more data may fill in the missing values or minimize the impact

- Report: Disclose the extent of missing data at the outset to avoid biased interpretation
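
A minimal sketch of single imputation, assuming missing values are represented as `None` and using only Python's standard library. The `missing_rate` and `impute_median` helpers and the sample ages are made up for illustration.

```python
from statistics import median


def missing_rate(values):
    """Share of entries that are None."""
    return sum(v is None for v in values) / len(values)


def impute_median(values):
    """Replace None with the median of the observed values."""
    observed = [v for v in values if v is not None]
    fill = median(observed)
    return [fill if v is None else v for v in values]


ages = [34, None, 29, 41, None, 38]  # illustrative data only

rate = missing_rate(ages)
imputed = impute_median(ages)

print(f"missing rate: {rate:.0%}")
print("after median imputation:", imputed)
```

Filling every gap with one constant keeps the sample size but understates the variability of the column. That is one reason multiple imputation or model-based estimates are often preferred when much of the data is missing.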

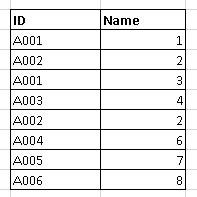

Duplicate Entries

Duplicate entries, or redundant records, are multiple copies of the same data within a dataset. They occur due to data merging, system glitches, and data entry and handling errors.

Effect

- Inaccurate analysis: Duplicates distort statistical measures, which in turn affects the insights drawn from the data

- Improper estimation: These lead to over or underestimation of attributes

- Data integrity: Loss in accuracy and reliability due to wrong data

Challenges

- Storage: Redundant records increase storage requirements, raising costs and wasting resources

- Processing: Performance decreases because the extra load on the system slows processing and analysis

- Maintenance: Requires additional effort for maintenance and organization of data

Solutions

- Unique identifier: Input or set a unique identifier to prevent or easily recognize duplicate entries

- Constraints: Introduce data constraints to ensure data integrity

- Audit: Perform regular data audits

- Fuzzy matching: Utilize fuzzy matching algorithms for the identification of duplicates with slight variations

- Hashing: Hash each record so that identical records produce the same value and are easy to identify
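
The hashing and fuzzy matching ideas can be sketched with Python's standard library. This is an illustrative sketch only: the customer records, the normalization (lowercasing and trimming whitespace) and the 0.85 similarity threshold are assumptions, and `difflib.SequenceMatcher` stands in for whatever fuzzy matching algorithm a real pipeline would use.

```python
import hashlib
from difflib import SequenceMatcher


def record_hash(record):
    """Fingerprint a record after normalizing case and whitespace."""
    normalized = "|".join(str(record[k]).strip().lower() for k in sorted(record))
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def exact_duplicates(records):
    """Return indexes of records whose fingerprint was already seen."""
    seen, dupes = set(), []
    for i, rec in enumerate(records):
        h = record_hash(rec)
        if h in seen:
            dupes.append(i)
        else:
            seen.add(h)
    return dupes


def fuzzy_pairs(names, threshold=0.85):
    """Return index pairs whose similarity ratio meets the threshold."""
    pairs = []
    for i in range(len(names)):
        for j in range(i + 1, len(names)):
            ratio = SequenceMatcher(None, names[i].lower(), names[j].lower()).ratio()
            if ratio >= threshold:
                pairs.append((i, j))
    return pairs


# Illustrative data only.
customers = [
    {"name": "Jane Smith", "email": "jane@example.com"},
    {"name": "jane smith ", "email": "JANE@example.com"},
    {"name": "Jane Smyth", "email": "jane.s@example.com"},
    {"name": "Robert Brown", "email": "rob@example.com"},
]

exact = exact_duplicates(customers)
unique = [c for i, c in enumerate(customers) if i not in exact]
near = fuzzy_pairs([c["name"] for c in unique])

print("exact duplicates at indexes:", exact)
print("near-duplicate name pairs (indexes into unique):", near)
```

Hashing only catches records that are identical after normalization. The pairwise fuzzy comparison catches small spelling variations but scales quadratically, so it is usually applied after exact duplicates have been removed.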

Outliers

Outliers are extreme values or observations that lie far from the bulk of the data. They can be unusually large or small and occur rarely. They arise from data entry mistakes and measurement errors, as well as from genuine extreme events.

Significance

- Descriptive statistics: The impact is seen in the mean and standard deviation that affects the data summary.

- Skewed distribution: Outliers skew the distribution, which can violate the assumptions of statistical tests and models.

- Inaccurate prediction: The outliers adversely impact the machine learning models leading to inaccurate predictions

Mechanisms

- Enhanced variability: Outliers increase data variability, resulting in larger standard deviations.

- Effect on central tendency: They pull the mean toward the extreme value and, to a lesser degree, can shift the median and other measures of central tendency

- Bias in regression models: Outliers exert a disproportionate influence on the fit, leading to biased coefficient estimates and poorer model performance

- Incorrect hypothesis testing: They violate the assumptions of tests, lead to incorrect p-values and draw erroneous conclusions.
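
A small worked example, using made-up numbers, shows how a single extreme value affects the mean, the median and the standard deviation:

```python
from statistics import mean, median, stdev

clean = [48, 50, 51, 52, 49]   # illustrative measurements
with_outlier = clean + [500]   # the same data plus one extreme value

print("mean:  ", mean(clean), "->", mean(with_outlier))
print("median:", median(clean), "->", median(with_outlier))
print("stdev: ", round(stdev(clean), 2), "->", round(stdev(with_outlier), 2))
```

In this example the mean jumps from 50 to 125 while the median only moves from 50 to 50.5, which is why the median is often described as the more robust summary.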

Solutions

- Threshold-based detection: Set a specific threshold value based on domain knowledge or a statistical method

- Winsorization: Cap extreme values at a chosen limit, such as a percentile, to reduce the impact of outliers

- Transformation: Apply logarithmic or square root transformations

- Modeling techniques: Use robust regression or tree-based models

- Outlier removal: Remove the values with careful consideration if they pose an extreme challenge
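
One common statistical threshold is the 1.5 × IQR rule, sketched below with the standard library. The choice of this rule, the `k=1.5` multiplier and the sample data are assumptions for illustration. Capping at the IQR fences is also only one variant; winsorization is more often described as capping at fixed percentiles.

```python
from statistics import quantiles


def iqr_bounds(values, k=1.5):
    """Lower and upper fences at k * IQR beyond the first and third quartiles."""
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    return q1 - k * iqr, q3 + k * iqr


def cap(values, lower, upper):
    """Clamp every value into [lower, upper] instead of dropping it."""
    return [min(max(v, lower), upper) for v in values]


data = [48, 49, 50, 51, 52, 500]  # illustrative data only

lower, upper = iqr_bounds(data)
outliers = [v for v in data if v < lower or v > upper]
capped = cap(data, lower, upper)

print("fences:", lower, upper)
print("flagged as outliers:", outliers)
print("after capping:", capped)
```

Capping keeps the row in the dataset, which matters when the other columns of that record are still valid. Removal is simpler but discards that information.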

Unstructured Data

Data that lacks a predefined structure or organization is called unstructured data, and it poses challenges for analysis. It results from changes in document formats, web scraping, the lack of a fixed data model, mixed digital and analog sources, and varied data collection techniques.

Challenges

- Lack of structure: The absence of a fixed structure makes analysis with traditional methods difficult

- Dimensionality: Such data is highly dimensional or contains multiple features and attributes

- Data heterogeneity: It can come in diverse formats, languages and encoding standards, which makes integration more complex

- Information extraction: Unstructured data requires handling through Natural Language Processing (NLP), audio processing techniques or computer vision.

- Impact on data quality: Results in lack of accuracy and verifiable sources, causes problems with integration and generates irrelevant and wrong data.

Solutions

- Metadata management: Use metadata for additional information for efficient analysis and integration

- Ontologies and taxonomies: Create these for a better understanding

- Computer vision: Process images and videos through computer vision for feature extraction and object recognition

- Audio processing: Implement audio processing techniques for transcription and for removing noise and irrelevant content

- Natural Language Processing (NLP): Use advanced techniques for processing and information extraction from textual data
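
Full NLP pipelines are beyond a short example, but the basic idea of information extraction, turning free text into structured fields, can be hinted at with regular expressions. This is a deliberately simple stand-in for NLP, not a substitute for it. The patterns, the `extract_fields` helper and the sample note are assumptions for illustration.

```python
import re

# Simplified patterns; real-world email and date formats vary far more widely.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
ISO_DATE = re.compile(r"\b\d{4}-\d{2}-\d{2}\b")


def extract_fields(text):
    """Pull email addresses and ISO-style dates out of free text."""
    return {"emails": EMAIL.findall(text), "dates": ISO_DATE.findall(text)}


# Illustrative free-text note.
note = (
    "Customer wrote from ana@example.com on 2023-07-14 asking about a refund; "
    "follow up by 2023-07-21."
)

fields = extract_fields(note)
print(fields)
```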

Data Inaccuracy

Human errors, data entry mistakes and outdated information compromise data accuracy. Inaccurate data can take the following forms:

- Typographical errors: Presence of transposed digits, incorrect formatting, misspellings

- Incomplete data: Missing data

- Data duplication: Redundant entries inflate or increase the numbers and skew statistical results

- Outdated information: Data loses relevance over time, leading to incorrect decisions and conclusions

- Inconsistent data: Different measurement units and variable names hinder data analysis and interpretation

- Data misinterpretation: Data taken from different contexts, or carrying different meanings, is misread

Solutions

- Data cleaning and validation (most important)

- Automated data quality tools

- Validation rules and business logic

- Standardization

- Error reporting and logging
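
Validation rules and error logging can be combined in a few lines. The sketch below is one possible shape, assuming records are dictionaries. The fields, the rules themselves (an age range, a loose email pattern, a two-letter uppercase country code) and the `data_quality` logger name are all hypothetical.

```python
import logging
import re

logging.basicConfig(level=logging.WARNING)
logger = logging.getLogger("data_quality")

# Each rule returns True when the value is acceptable (illustrative rules only).
RULES = {
    "age": lambda v: isinstance(v, int) and 0 <= v <= 120,
    "email": lambda v: isinstance(v, str)
    and re.fullmatch(r"[^@\s]+@[^@\s]+\.[^@\s]+", v) is not None,
    "country": lambda v: isinstance(v, str) and len(v) == 2 and v.isupper(),
}


def validate(record):
    """Return a list of human-readable problems found in one record."""
    errors = []
    for field, rule in RULES.items():
        if field not in record:
            errors.append(f"{field}: missing")
        elif not rule(record[field]):
            errors.append(f"{field}: invalid value {record[field]!r}")
    return errors


rows = [
    {"age": 34, "email": "ana@example.com", "country": "US"},
    {"age": 340, "email": "ana[at]example.com", "country": "usa"},
]

report = {}
for i, row in enumerate(rows):
    errors = validate(row)
    if errors:
        report[i] = errors
        logger.warning("row %d failed validation: %s", i, "; ".join(errors))
```

Keeping the rules in one table makes it easier to review them with business owners and to extend them without touching the validation loop.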

Importance of data cleaning and validation

- Cost saving: Prevents inaccurate results, thus saving expenditure on resources

- Reduced errors: Prevents the development of error-based reports

- Reliability: The data validation and cleaning process generates reliable data and hence reliable results

- Effective decision-making: The reliable data aids in effective decision making

Data Incompleteness

The absence of attributes crucial for analysis, decision-making and understanding is referred to as data incompleteness, or missing key attributes. It arises from data entry errors, incomplete data collection, data processing issues or intentional data omission. Incomplete data disrupts comprehensive analysis, as the challenges below show.

Challenges

- Difficulty in pattern detection: Gaps make it harder to detect meaningful patterns and relationships within the data

- Loss of information: The results lack valuable information and insights due to defective data

- Bias: The development of bias and problems with sampling is common due to the non-random distribution of missing data

- Statistical bias: Incomplete data leads to biased statistical analysis and inaccurate parameter estimation

- Impact on model performance: Key impact is seen in the performance of machine learning models and predictions

- Communication: Incomplete data results in miscommunication of results to stakeholders

Solutions

- Collect extra data: Collect more data to easily fill in the gaps in poor data

- Set indicators: Recognise the missing information through indicators and handle it efficiently without compromising the process and result

- Sensitivity analysis: Look for the impact of missing data on analysis outcomes

- Enhance data collection: Find out the errors or shortcomings in the data collection process to optimize them

- Data auditing: Perform regular audits to look for errors in the process of data collection and collected data
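
Missing-value indicators and a basic sensitivity analysis can be sketched as follows. The income figures are invented, and the three handling strategies compared here (dropping, mean fill, median fill) are just examples of choices an analyst might test.

```python
from statistics import mean, median

incomes = [42_000, None, 55_000, 61_000, None, 250_000]  # illustrative data only

# Indicator: 1 where the value was missing, so the gap stays visible after filling.
was_missing = [int(v is None) for v in incomes]

observed = [v for v in incomes if v is not None]


def mean_after_fill(fill):
    """Mean of the column when every gap is replaced by `fill`."""
    return mean(fill if v is None else v for v in incomes)


# Sensitivity analysis: how much does the estimate depend on the handling choice?
estimates = {
    "drop_missing": mean(observed),
    "fill_with_mean": mean_after_fill(mean(observed)),
    "fill_with_median": mean_after_fill(median(observed)),
}
spread = max(estimates.values()) - min(estimates.values())

for name, value in estimates.items():
    print(f"{name}: {value:,.0f}")
print(f"spread across strategies: {spread:,.0f}")
```

A large spread suggests that conclusions depend heavily on how the gaps are handled, which is worth reporting alongside the result.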

Data Bias

Data bias is the presence of systematic errors or prejudice in a dataset leading to inaccuracy or generation of results inclined toward one group. It may occur at any stage, such as data collection, processing or analysis.

Challenges

- Lack of accuracy: Data bias leads to skewed analysis and conclusions

- Ethical concerns: Arise when decisions unfairly favor a particular person, community, product or service

- Misleading prediction: Biased data leads to unreliable predictive models and inaccurate forecasts

- Unrepresentative samples: They make it difficult to generalize the findings to a broader population

Solutions

- Bias metrics: Use bias metrics for tracking and monitoring bias in the data

- Inclusivity: Include data from diverse groups to avoid systematic exclusion

- Algorithmic fairness: Implement ML algorithms capable of bias reduction

- Sensitivity analysis: Perform it to assess the impact of data bias on analysis outcomes

- Data auditing and profiling: Audit and conduct data profiling regularly

- Documentation: Clearly and precisely document the data for transparency and to easily address the biases
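
One simple bias metric is the difference in positive-outcome rates between groups, often called the demographic (or statistical) parity difference. The sketch below computes it for made-up approval decisions. The group labels `A` and `B` and the data are purely illustrative, and this is only one of many possible metrics.

```python
from collections import defaultdict


def selection_rates(records):
    """Share of positive outcomes per group, from (group, outcome) pairs."""
    totals, positives = defaultdict(int), defaultdict(int)
    for group, approved in records:
        totals[group] += 1
        positives[group] += int(approved)
    return {g: positives[g] / totals[g] for g in totals}


# Illustrative decisions: (group, approved?)
decisions = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

rates = selection_rates(decisions)
parity_gap = max(rates.values()) - min(rates.values())

print("selection rates:", rates)
print("parity gap:", parity_gap)
```

A gap of zero means every group receives positive outcomes at the same rate. A large gap is a signal to investigate, not proof of unfairness on its own.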

Inadequate Data Security

Data security issues compromise data integrity and the organization’s reputation. They show up as unauthorized access, data manipulation, ransomware attacks and insider threats.

Challenges

- Data vulnerability: Identification of vulnerable points

- Advanced threats: Sophisticated cyber attacks require advanced and efficient management techniques

- Data privacy regulation: Ensuring data security while complying with evolving data protection laws is complex

- Employee awareness: Requires educating each staff member

Solutions

- Encryption: Encrypt sensitive data at rest and in transit to protect it from unauthorized access

- Access controls: Implement strictly controlled access for employees based on their roles and requirements

- Firewalls and Intrusion Detection System (IDS): Deploy firewalls and install an IDS

- Multi-factor authentication: Enable multi-factor authentication for additional security

- Data backup: Maintain regular backups to mitigate the impact of cyber attacks

- Vendor security: Assess and enforce data security standards for third-party vendors
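
Role-based access control can be pictured as a lookup from roles to permitted actions. The sketch below is a toy model: the role names, the permission sets and the `is_allowed` helper are hypothetical, and a production system would rely on its platform's access management rather than an in-memory dictionary.

```python
# Hypothetical mapping of roles to the actions they may perform.
ROLE_PERMISSIONS = {
    "analyst": {"read"},
    "engineer": {"read", "write"},
    "admin": {"read", "write", "delete"},
}


def is_allowed(role, action):
    """Deny by default: unknown roles and unlisted actions are rejected."""
    return action in ROLE_PERMISSIONS.get(role, set())


for role, action in [("analyst", "read"), ("analyst", "write"), ("guest", "read")]:
    verdict = "allowed" if is_allowed(role, action) else "denied"
    print(f"{role} -> {action}: {verdict}")
```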

Data Governance and Quality Management

Data governance concerns the establishment of policies, procedures and guidelines to ensure data integrity, security and compliance. Data quality management deals with the processes and techniques used to assess, improve and maintain the accuracy, consistency and completeness of data so that it becomes more reliable.

Challenges

- Data silos: Fragmented data is difficult to integrate and keep consistent

- Data privacy concerns: Data sharing, privacy and the handling of sensitive information pose a challenge

- Organizational alignment: Buy-in and alignment are difficult to achieve in large organizations

- Data ownership: Difficult to identify and establish ownership

- Data governance maturity: It requires time for conversion from ad-hoc data practices to mature governance.

Solutions

- Data improvement: It includes profiling, cleansing, standardization, data validation and auditing

- Automation in quality: Automate the process of validation and cleansing

- Continuous monitoring: Regularly monitor data quality and address issues as they are found

- Feedback mechanism: Create a mechanism such as forms or ‘raise a query’ option for reporting data quality issues and suggestions
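
Automated, continuous monitoring often comes down to computing a few quality metrics on each batch and comparing them with agreed thresholds. A minimal sketch follows, assuming dictionary records. The two metrics (completeness and key uniqueness), the 0.9 thresholds and the helper names are assumptions for this example.

```python
def quality_metrics(rows, key, required):
    """Compute simple completeness and uniqueness ratios for a batch."""
    total = len(rows)
    # A row is complete when every required field is present and non-empty.
    complete = sum(all(r.get(f) not in (None, "") for f in required) for r in rows)
    unique_keys = len({r.get(key) for r in rows})
    return {"completeness": complete / total, "uniqueness": unique_keys / total}


def failed_checks(metrics, thresholds):
    """Names of metrics that fall below their minimum acceptable value."""
    return sorted(name for name, minimum in thresholds.items() if metrics[name] < minimum)


# Illustrative batch: one empty email and one repeated id.
batch = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": ""},
    {"id": 2, "email": "b@example.com"},
    {"id": 3, "email": "c@example.com"},
]

metrics = quality_metrics(batch, key="id", required=["id", "email"])
alerts = failed_checks(metrics, {"completeness": 0.9, "uniqueness": 0.9})

print("metrics:", metrics)
print("below threshold:", alerts)
```

Run on a schedule, a check like this turns data quality from an occasional audit into a routine signal that can feed the reporting mechanism described above.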

Conclusion

Recognizing and addressing poor data is essential for any data-driven organization. By understanding the common cases of poor data quality, businesses can take proactive measures to ensure the accuracy and reliability of their data. Analytics Vidhya’s Blackbelt program offers a comprehensive learning experience, equipping data professionals with the skills and knowledge to tackle data challenges effectively. Enroll in the program today and empower yourself to become a proficient data analyst capable of navigating the complexities of data to drive informed decisions and achieve remarkable success in the data-driven world.

Frequently Asked Questions

A. The four common data quality issues are inaccurate, incomplete, duplicate and outdated data.

A. The factors responsible for poor data quality are incomplete data collection, lack of data validation, data integration issues and data entry errors.

A. Bad data includes duplicate entries, missing values, outliers, contradictory information and similar problems.

A. The five characteristics of data quality are accuracy, completeness, consistency, timeliness and relevance.